How Sentiment Analysis Can Help Prevent Self-Harm

Published:

Data, when leveraged, can be very powerful.

When searching for terms related to self-harm (don’t do this folks), search engines like Google are able to recognize the potentially harmful nature of those terms and respond accordingly: by displaying helpful resources such as suicide hotlines, counseling services, or medical support.

But how does Google identify that these terms may be harmful?

The answer is sentiment analysis.

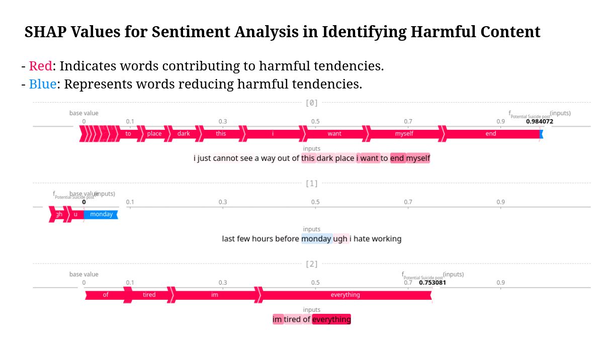

This is a technique in Natural Language Processing (NLP) where machine learning models are trained to understand the emotional tone of a piece of text. These models are usually trained on large datasets labeled as positive, negative, or neutral. But in sensitive applications, like detecting harmful or suicidal thoughts, the models are further trained on special data to recognize high-risk emotional cues, like despair or hopelessness.

So when someone types a search query like “I want to disappear” or “how to end it all,” the model doesn’t just see words. It sees patterns (emotional signals in the language) that match with examples of self-harm or distress it has seen before. Once detected, the system can respond immediately, for example, by showing links to helplines or offering a prompt to get help.

This isn’t magic. It’s just good data, smart modeling, and a very important use case of machine learning.